How CMS Evaluated and Implemented Its Security Data Lake Strategy with Snowflake

The Centers for Medicare & Medicaid Services (CMS) is a federal agency within the United States Department of Health and Human Services (HHS) that administers the Medicare program and works in partnership with state governments to administer Medicaid, the Children’s Health Insurance Program (CHIP), and health insurance portability standards. CMS is the single largest payer for healthcare in the United States. To put that scale in perspective, CMS currently provides health coverage to more than 100 million people through Medicare, Medicaid, CHIP, and the Health Insurance Marketplace.

The security organization at CMS is responsible for a variety of priorities, from national security-oriented cyber supply chain risk management (C-SCRM) work to security operations that generate a great deal of security telemetry. The organization also conducts continuous diagnostics and mitigation (CDM), in which it performs control assessments on authorized systems.

Challenges with security data at CMS

Security data at CMS was traditionally siloed across teams within the organization. There were often parallel efforts to ingest, store, and normalize the same data in multiple ways. These inefficiencies created duplicative, non-universal ways to process various security data streams and resulted in security visibility issues, extra data storage costs, employee hours spent on ETL pipelines, and more.

For federal agencies like CMS that handle massive amounts of sensitive data, security teams need comprehensive visibility and a consistent interpretation of security posture so that they can collaborate effectively with one another.

CMS Chief Information Security Officer Rob Wood and Chief Information Architect Amine Raounak led an in-depth presentation on their security data lake initiative with Snowflake. Watch the full video here.

The Snowflake Data Cloud as CMS’ security data lake

The “Mission Enablement” workstream at CMS was created to identify security areas within the organization that would benefit from an engineering-first, data-driven approach. The security data lake project enables data-driven decision-making for system and product security at scale within CMS. It has become a central pillar that spans anything generating security telemetry within CMS. The objective is to securely expose and democratize access to all of that telemetry in a multi-tenant fashion, leveraging Snowflake’s row-level filtering and role-based access control (RBAC) features.

CMS decided to build its security data lake on the Snowflake Data Cloud so that all security stakeholders could have well-governed access to their security streams. This single, multi-tenant data repository not only provides a more cost-effective way to operate, but also enables data analytics at a scale never before seen at CMS.
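The multi-tenant access model described above can be illustrated with a small sketch. It is not Snowflake's API and not CMS's actual configuration: it uses Python's built-in `sqlite3` as a stand-in, and the table names (`findings`, `role_access`), role names, and sample rows are all hypothetical. In Snowflake itself this kind of filtering would be enforced by the platform's own row-level controls rather than by application code; the sketch only shows the underlying idea of mapping roles to the rows they may see.

```python
import sqlite3

# In-memory database standing in for the shared, multi-tenant repository.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Hypothetical shared table of security findings from several teams.
    CREATE TABLE findings (team TEXT, asset TEXT, severity TEXT);
    -- Hypothetical mapping of roles to the teams whose rows they may read.
    CREATE TABLE role_access (role TEXT, team TEXT);

    INSERT INTO findings VALUES
        ('team_a', 'web-01', 'high'),
        ('team_a', 'db-01',  'low'),
        ('team_b', 'api-07', 'critical');

    INSERT INTO role_access VALUES
        ('TEAM_A_ANALYST', 'team_a'),
        ('TEAM_B_ANALYST', 'team_b'),
        ('ENTERPRISE_SOC', 'team_a'),
        ('ENTERPRISE_SOC', 'team_b');
""")


def visible_findings(role):
    """Return only the rows that the given role is mapped to."""
    return conn.execute(
        """
        SELECT f.team, f.asset, f.severity
        FROM findings AS f
        JOIN role_access AS r ON r.team = f.team
        WHERE r.role = ?
        ORDER BY f.team, f.asset
        """,
        (role,),
    ).fetchall()


team_a_rows = visible_findings("TEAM_A_ANALYST")  # only team_a rows
soc_rows = visible_findings("ENTERPRISE_SOC")     # rows from both teams
```

Every team queries the same table, and a role that has no mapping sees no rows at all, which is the property that makes a single shared repository workable for independent teams.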

According to Raounak, “Today, data is the first-class citizen and not the security tools. Tooling should get closer to the data rather than confining the data within the boundaries of a given tool. When it comes to security at CMS, it should never be about a single tool and its capabilities; it has to be about making a data-driven, sensible, risk-driven culture, and the data lake is the solution we believe will fulfill this promise.”

“We’ve been able to design a security program with an architecture that fits our needs. Our security data lake is built on Snowflake’s open ecosystem and supports the right security tools to contribute to a risk based approach,” said Raounak.

Architecture Implementation

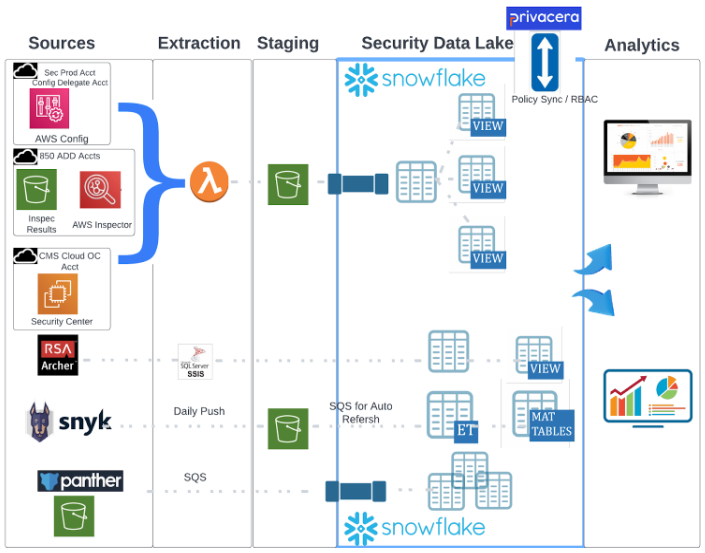

Snowflake enables CMS to collect data up and down the stack to get as much context as possible, including but not limited to cloud, network, database, host, and application data.

As shown in Figure 2, Snowflake is the single, central place where CMS stores and operationalizes security telemetry from a variety of sources that contribute different types of security data.

Broadly, the security data types include, but are not limited to:

- Configuration data from the CMS cloud ecosystem via AWS Config

- Vulnerability telemetry data from over 850 AWS accounts across CMS

- Compliance data from the GRC tool RSA Archer

- Software composition analysis (SCA) data from Snyk

- Security center data from Tenable

- Vulnerability telemetry data and code dependency monitoring results from Snyk

- Threat detection data from Panther that provides normalized and enriched security data, historical data, and event logs, enabling detection as code

Using Snowflake as the security data lake empowers security teams across CMS to tap into a single, central, and authoritative source for all their security data needs and investigations. This removes duplicative work in ingesting and transforming security data, which saves time and improves efficiency. In addition, security teams across CMS can correlate data and events for faster investigations rather than worrying about where a given data set lives or moving data from one place to another.

Benefits and use cases enabled by Snowflake security data lake

Enabling multiple security teams with a single source of truth

CMS has independent security teams operating throughout the agency, and they are typically managing their own team operations and storing their own data. If these independent security teams wanted to enrich their data to compare and contrast operational states, assess security readiness, contrast their vulnerabilities to threat intelligence information, or conduct proactive threat hunting on their own, they would require the external data set to be moved from its existing location to the SIEM where it can be analyzed.

Now with the Snowflake Data Cloud as CMS’ security data lake, these independent security teams can easily get their data enriched with enterprise data by exposing those data sets in a secure environment. Security teams can immediately begin writing queries for analysis.

Reacting to vulnerabilities in minutes

CMS recently conducted a postmortem exercise for the Log4Shell vulnerability in Log4j. The security team needed to find systems throughout the environment that were running specific versions of the library. Previously, when a data call went out, teams had to self-report whether they were running Log4j by various means, such as manually going through software bills of materials (SBOMs), software composition analysis (SCA) results, or dynamic scanning. Typically this would be a weeks-long effort for multiple teams, which often included:

- Product teams

- SOC teams

- Reporting teams

- Engineering teams (to pipe the data into a consumable report)

This manual process was cumbersome and took the organization weeks to determine which assets were affected because each team was working in a silo within their own jurisdictions.

If CMS had already had a security data lake with Snowflake in place during the Log4j incident, it could have answered where and what the potential threat exposure was in minutes with a simple SQL query, because all the security data would have been aggregated in a single place. For example, systems running Snyk could have loaded their output data into Snowflake. Other data, such as SBOMs from any system or piece of technology that was not running Snyk, could also have been ingested into Snowflake for a holistic view. With consolidated data in a security data lake, CMS could have understood which systems were running vulnerable libraries within minutes instead of weeks.
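To make the "simple SQL query" idea concrete, here is a minimal sketch under stated assumptions. It uses Python's built-in `sqlite3` in place of Snowflake, and the `dependencies` table, its columns, the system names, and the sample rows are all hypothetical rather than CMS's actual schema. The version cutoff is an illustrative parameter; a real investigation would take the affected range from the published advisory.

```python
import sqlite3

# In-memory database standing in for the consolidated security data lake.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Hypothetical table combining SCA output and ingested SBOMs.
    CREATE TABLE dependencies (
        system TEXT, source TEXT, package TEXT, version TEXT
    );
    INSERT INTO dependencies VALUES
        ('claims-api', 'snyk', 'org.apache.logging.log4j:log4j-core', '2.14.1'),
        ('claims-api', 'snyk', 'com.fasterxml.jackson.core:jackson-databind', '2.13.0'),
        ('portal',     'sbom', 'org.apache.logging.log4j:log4j-core', '2.17.1'),
        ('batch-jobs', 'sbom', 'org.apache.logging.log4j:log4j-core', '2.11.0');
""")

# Illustrative cutoff only: versions below this are treated as affected.
CUTOFF = (2, 15, 0)


def version_tuple(version):
    """Parse a purely numeric dotted version such as '2.14.1'."""
    return tuple(int(part) for part in version.split("."))


def systems_running(package, cutoff=CUTOFF):
    """List (system, source, version) rows for a package below the cutoff."""
    rows = conn.execute(
        """
        SELECT system, source, version
        FROM dependencies
        WHERE package = ?
        ORDER BY system
        """,
        (package,),
    ).fetchall()
    # Compare versions numerically; plain string comparison would misorder them.
    return [row for row in rows if version_tuple(row[2]) < cutoff]


affected = systems_running("org.apache.logging.log4j:log4j-core")
```

Because Snyk output and SBOM data sit in the same table, one query covers systems regardless of which tool reported them. The version parser here assumes numeric versions only and would need extending for pre-release labels.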

Ensuring scale and performance for a long-term strategy

Snowflake allows CMS to inexpensively ingest data at any scale and to collect it without having to define the structure of the logs at the time the data is captured. This lets CMS store very large, rarely accessed data sets as-is, without spending cycles parsing them before ingesting them into Snowflake. For example, VPC flow logs are voluminous and rarely accessed. CMS stores them in Snowflake unparsed, knowing that they can still be accessed at the attribute level using Snowflake's schema-on-read capability.
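The schema-on-read idea can be sketched in a few lines of plain Python. This is a conceptual illustration, not how Snowflake implements it: the `raw_store` list stands in for a table holding one unparsed text value per record, the helper names are hypothetical, and the sample records are invented. The field order follows the default (version 2) AWS VPC flow log format.

```python
# Field order of the default AWS VPC flow log format (version 2).
FLOW_LOG_FIELDS = (
    "version", "account_id", "interface_id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log_status",
)

# Stand-in for a table with a single raw text column.
raw_store = []


def ingest(line):
    """Store the record exactly as received; no parsing at write time."""
    raw_store.append(line)


def read_attribute(name):
    """Apply the schema at read time and return one attribute per record."""
    index = FLOW_LOG_FIELDS.index(name)
    return [line.split()[index] for line in raw_store]


def rejected_sources():
    """Return the distinct source addresses of rejected flows."""
    pairs = zip(read_attribute("srcaddr"), read_attribute("action"))
    return sorted({src for src, action in pairs if action == "REJECT"})


# Invented sample records for illustration.
ingest("2 123456789012 eni-0a1b2c3d 10.0.1.5 10.0.2.9 49152 443 6 10 8400 "
       "1700000000 1700000060 ACCEPT OK")
ingest("2 123456789012 eni-0a1b2c3d 203.0.113.7 10.0.1.5 51000 22 6 3 180 "
       "1700000000 1700000060 REJECT OK")
```

The cost of parsing is paid only when someone actually asks a question of the data, which suits logs that are written constantly but read rarely.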

Snowflake also supports detection engines such as Panther, which provides real-time ingestion. Panther will ingest and transform the data, run available out-of-the-box detections on the data, and then store the transformed data in Snowflake for future queries. Snowflake’s flexible, highly scalable, and performant engine enables large organizations like CMS to build a long-term security data strategy in the Data Cloud.

Opening up new opportunities

Snowflake plays a pivotal role in CMS’ security strategy as the single, central, authoritative source of truth for all security data. It houses many different types of security telemetry, provides a 360-degree view for security data needs within CMS, and can cater to multiple security use cases.

“Instead of getting subjective answers, there’s a big push to move toward attestable, verifiable artifacts that we can use in the system authorization process. It’s quick and concretely queryable. Even if the data doesn’t exist right now, the beautiful thing about our architecture is that it’s very easy to onboard new data sets, even for an organization as large as CMS,” said Wood.

CMS is now in a position where Snowflake can simultaneously open up opportunities for machine learning model development. Data scientists can do more cross-sectional analysis of the data they have and quickly extrapolate how different things relate.

In the future, Raounak anticipates that a complex security question such as “What accounts or what systems are fronted by a WAF (web application firewall)?” will be answered by a simple query against the security data lake. Tracing security data lineage or finding issues with security data now comes down to writing widely used ANSI SQL against the security data lake, instead of having to learn the proprietary or domain-specific languages required by specific tools.
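As a rough sketch of what such a query might look like, the example below answers the WAF question with one standard SQL join. It again uses Python's built-in `sqlite3` as a stand-in for the data lake, and the tables (`entry_points`, `waf_associations`), columns, identifiers, and rows are hypothetical, not CMS's actual data model.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Hypothetical inventory of internet-facing entry points per system.
    CREATE TABLE entry_points (account_id TEXT, system TEXT, resource_id TEXT);
    -- Hypothetical record of which entry points have a WAF attached.
    CREATE TABLE waf_associations (resource_id TEXT, web_acl TEXT);

    INSERT INTO entry_points VALUES
        ('acct-001', 'portal',     'lb-portal'),
        ('acct-002', 'claims-api', 'lb-claims'),
        ('acct-003', 'batch-jobs', 'lb-batch');

    INSERT INTO waf_associations VALUES
        ('lb-portal', 'acl-default'),
        ('lb-claims', 'acl-default');
""")

# A LEFT JOIN keeps every entry point and flags whether a WAF row matched.
waf_coverage = conn.execute("""
    SELECT e.account_id,
           e.system,
           CASE WHEN w.resource_id IS NULL THEN 0 ELSE 1 END AS fronted_by_waf
    FROM entry_points AS e
    LEFT JOIN waf_associations AS w ON w.resource_id = e.resource_id
    ORDER BY e.account_id
""").fetchall()

# Systems with no WAF in front of them.
unprotected = [system for _, system, fronted in waf_coverage if not fronted]
```

The same join pattern applies to other coverage questions once the relevant configuration data has been onboarded, with no tool-specific query language involved.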

If you’d like to learn how you can leverage Snowflake as your security data lake to support use cases from incident response and compliance to vulnerability management and cloud security, please contact your Snowflake representative or visit our website.