FEATURE

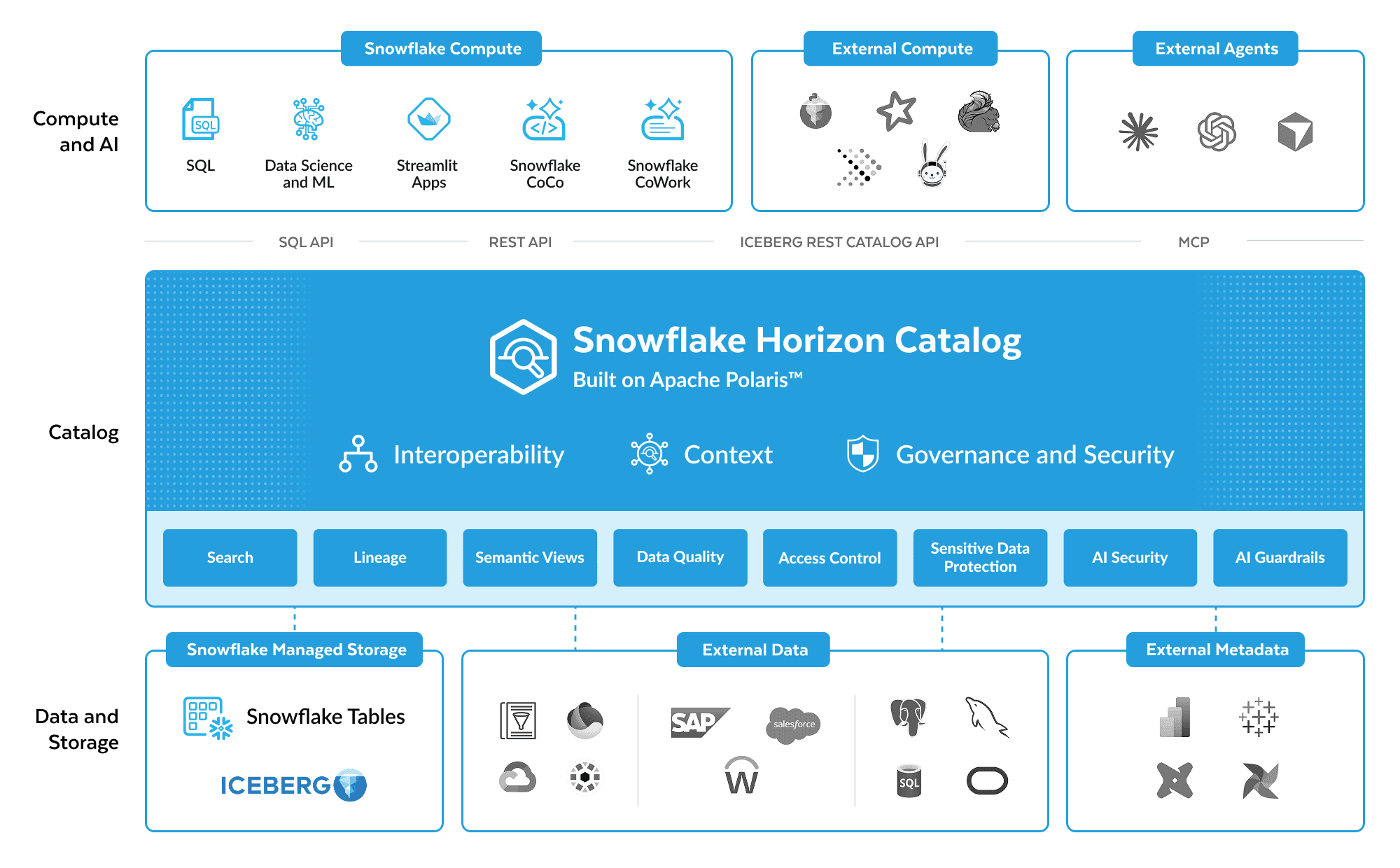

Snowflake Horizon Catalog

Govern and find data and AI assets across Snowflake and external catalogs. Enterprise context, lineage, data quality monitoring and AI guardrails mean every answer is traceable and trusted.

Connect your entire data estate

One catalog for data inside and outside Snowflake. Open table formats. Any compute engine.

Build your AI context layer, automatically

Collect, enrich and activate context across BI and data so humans and AI operate on the same trusted semantics.

Deploy governed, trustworthy AI

AI guardrails detect and block sensitive data before it reaches users. End-to-end lineage traces AI-generated answers back to the source.

BENEFITS

Horizon Catalog: Built for trusted answers

Universal Agentic Catalog

Ask Snowflake CoCo in plain language. Get trusted responses based on your data.

Convert intent into active Horizon Catalog policies for masking, access controls, data quality and more.*

Classify and tag data, add documentation and even create semantic models with a simple prompt.

Query data across Snowflake, external data lakes and external relational databases without switching tools.

Connect to any Iceberg catalog to discover and act on fresh data, with support for both reads and writes.

A connected data estate

A truly open and interoperable catalog

Benefit from community contributions by choosing a catalog that implements the open APIs of Apache Iceberg™ and Apache Polaris™.

Work from a single governed copy of your data in any catalog, with read and write operations from Snowflake.

Quickly integrate data sources such as external databases, BI tools and data pipeline systems with out-of-the-box connectors for PostgreSQL, Tableau, Microsoft Power BI, dbt and more.

A single catalog UI surfaces Snowflake objects, external tables, dashboards and AI assets in one place.

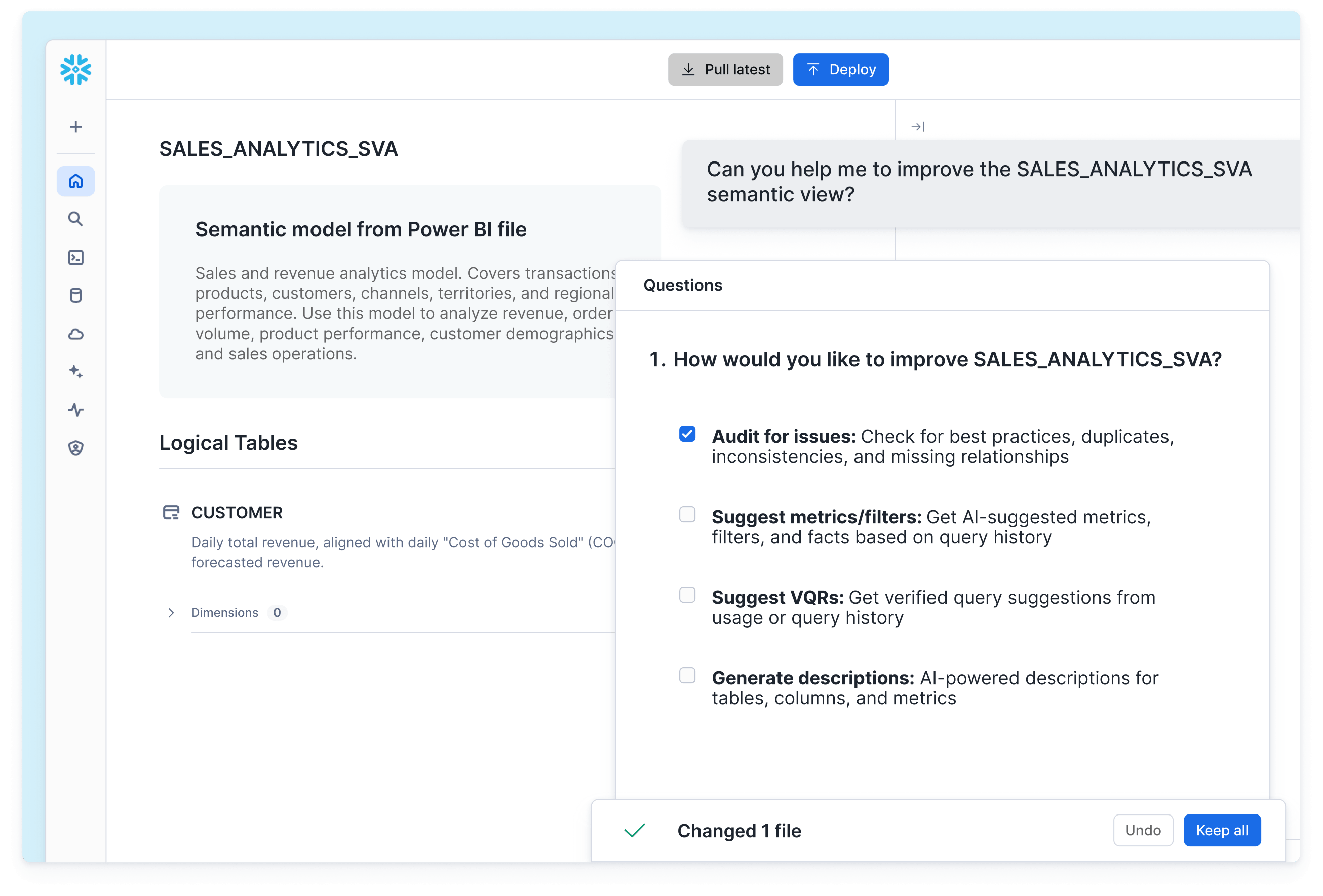

AI context layer, built automatically

Collect, enrich and activate context so humans and AI operate on the same trusted semantics

Gather rich context, such as query logs, popularity, joins and relationships, from Tableau, Power BI and other BI sources into one searchable catalog, where every engine sees the same definitions and lineage.

Track column-level lineage across Snowflake and external databases, rank assets by popularity and auto-generate documentation from metadata.

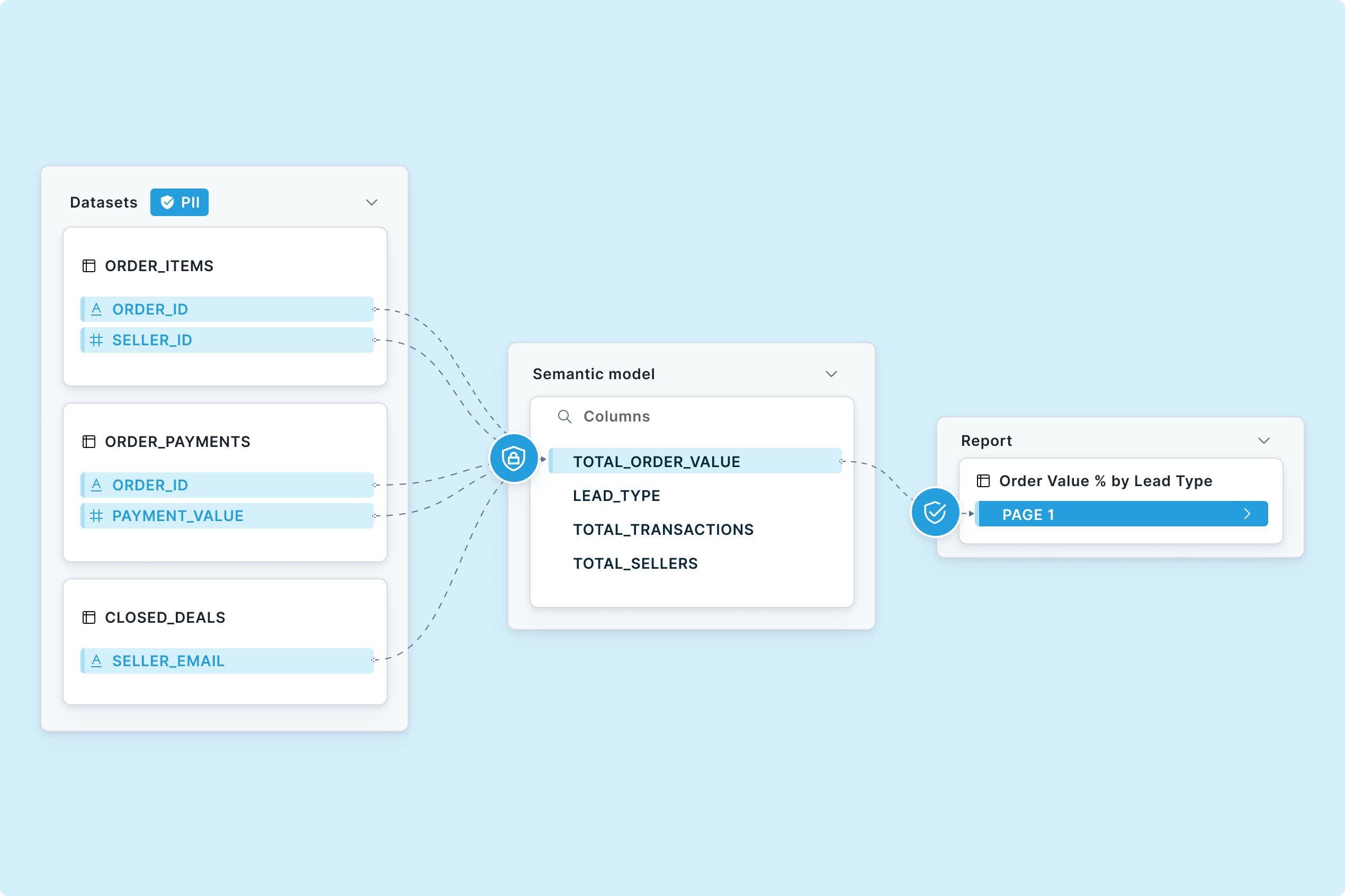

Semantic View Autopilot creates business-ready definitions from your existing BI sources, and Semantic Studio brings git versioning and CoCo editing together in one workspace.

Open Semantic Interchange keeps business logic consistent across any tool or catalog.

Deploy governed, trustworthy AI

Every answer is backed by secure, policy-enforced data

Agents operate under the same RBAC policies as humans. An agent dashboard shows all active agents, MCP connections and policy status.

AI Guardrails detect, redact and block PII from agent outputs before they reach users or tools.

Data quality monitoring and lineage help ensure every query runs on fresh data.

Masking and row-access policies defined in Snowflake are enforced for data queried through any Iceberg REST Catalog-compatible external engine.

Sensitive data monitoring discovers and classifies data, applying tags and policies that keep PII safe.

Advertising, Media and Entertainment

Merkle Improves Customer Experiences While Providing Data Governance and Security

Merkle, a dentsu company, consolidates sensitive data and collaborates with clients in Snowflake, resulting in a more efficient, trusted data environment that expedites data access and reduces risk.

- 64% Faster data development cycle

- 20% Estimated cost savings

Your Governance Resources

Resources, partner integrations and community expertise to help you govern data at scale.

See Horizon Catalog in Action

See live demos of Horizon Catalog's bidirectional interoperability, governance for AI and semantic views.

Snowflake Community

Meet and learn from a global network of data governance leaders in Snowflake’s community forum and Snowflake User Groups.

Snowflake Documentation

Explore Snowflake documentation for features, tutorials and a detailed reference of commands and operations.

Resources