SAP applications are rich sources of extremely high value data for any organization running SAP applications. But this data is not only valuable to the company running the SAP application. It’s just as important, if not more important, to the customers, partners, and vendors that the company works with. According to SAP, 77% of the world’s transactions revenue touches an SAP system at some point, and if you are reading this blog, you are most likely one of the thousands of customers using SAP to run your business.

The goal of this blog is to explain how to work with SAP data in Snowflake Cloud Data Platform as part of an ELT workflow. It has two parts.

Part 1 provides an introduction to the technologies—HVR and Snowflake—used to enable the workflow and explain how HVR can replicate the data from SAP into Snowflake.

Part 2 presents a method for transforming the replicated SAP data in Snowflake into a dimensional star schema.

The workflow covered in this blog focuses on financial data coming out of SAP ERP, but the method presented applies to almost any component in the SAP ecosystem, including but not limited to:

- ERP (Enterprise Resource Planning)

- CRM (Customer Relationship Management)

- PLM (Product Lifecycle Management)

- SCM (Supply Chain Management)

- SRM (Supplier Relationship Management)

- S/4HANA

- And many more…

Most of the customers I work with are looking for faster, cheaper, and easier ways to get more value from their SAP data. They want to combine SAP and non-SAP data, run analytical workloads, run machine learning workloads, perform historical analyses, and mine for deeper insights.

Historically, best practices have dictated that transaction processing (OLTP) and analytical workloads (OLAP) be kept separate, and that is as true today as it was 20 years ago. Regardless of the database underlying your SAP applications, you cannot effectively run large, resource-intensive OLAP workloads in the same database running your OLTP applications without running into resource contention. The most effective option is replicating data out of the transactional source systems and loading into a data warehouse.

This is where HVR and Snowflake come in.

HVR

HVR is a real-time data replication solution that organizations such as 1-800 Flowers and Suez choose because of its ability to replicate large volumes of data from a variety of sources into cloud data warehouses. It is well-suited to deliver data from operational systems, including SAP, into an analytical environment for several reasons:

Efficiency

Log-based Change Data Capture (CDC) is an efficient approach to retrieve incremental change data in real-time, without impacting the transaction processing system. HVR supports log-based CDC for numerous database technologies, including ones commonly used for SAP, like Oracle, SQL Server, DB2, and SAP HANA.

Performance

HVR is deployed in a distributed architecture, achieving optimizations with respect to:

- Access to the data source and transaction logs

- Network communication, with data always compressed on the wire

- Delivery of change data into the target, implementing best practices for fast data loads

In distributed setups, HVR has demonstrated processing change data volumes of a few hundred GBs per hour for a single source.

Security

Moving data between systems and especially into the cloud introduces a risk of data breaches. HVR’s distributed architecture features data encryption on the wire, as well as flexible options for authentication and the use of a proxy to facilitate as little need as possible to open the firewall.

Flexibility

HVR provides an efficient way to augment data on the target and perform common row-level transformations:

- Include extra columns with information like replication timestamp, source system name, etc.

- Transform delete operations from the source into soft deletes to mark the row as deleted.

- Create an audit trail of changes for every row change.

- Decoding of SAP’s cluster and pool table data.

Snowflake

Snowflake Cloud Data Platform is built from scratch to run in the cloud whether it’s in AWS, Azure, or most recently, GCP.

Snowflake is a great for managing and making sense of SAP data for many reasons:

Ease of Development

Snowflake is a SQL data platform, which means if you know how to write SQL, you can start building data pipelines and analytics immediately. There is no hardware to procure, no software to install and configure. When you sign up for a Snowflake account you instantly have a secure platform with infinite scalability.

Performance

Snowflake outperforms traditional methods for executing data workloads. Compute resources scale linearly in Snowflake, while efficient query optimization delivers answers in a fraction of the time of legacy cloud or on-premises systems. Performance challenges can be addressed in seconds. You can specify the size of a compute cluster based on the performance you initially require. But you can resize at any time, even while a workload is running.

Scalability

Snowflake storage can scale to any size you require because our persistence layer is hyperscale cloud provider blob storage.

For compute, Snowflake allows customers to create virtual warehouses (compute engines) which can run multiple workloads against the same data without a contention for resources.

With the introduction of the multi-cluster data warehouse feature, Snowflake allows customers to dynamically or automatically scale and load-balance increasing/decreasing number of concurrent users (queries). Multi-cluster warehouses deliver a consistent SLA to an unlimited number of concurrent users.

Data Sharing

In the opening, we referenced how valuable SAP data is both internally and externally to an organization. Snowflake Data Sharing is a powerful yet simple-to use feature of Snowflake for sharing data and for using shared data. In a matter of minutes, you can provide live access to any of your data stored in Snowflake for any number of data consumers, inside or outside your organization, without moving or copying the data. Share data across corporate divisions, external data consumers, and business partners to easily support richer analytics, new business models and data-driven initiatives.

Snowflake Cost Model

Per-second, usage-based pricing for compute and storage means you pay only for the amount of data you store and the amount of compute processing you use. This translates to no large upfront costs, over-provisioned systems, or idle clusters consuming money.

Developing on Snowflake is Fun

Snowflake is a brand-new platform built from scratch in the cloud to take advantage of the capabilities the cloud makes available. With no hardware to worry about, software to install and tune, and a large and growing ecosystem of technology partners, like HVR, developers are freed of mundane data warehousing tasks and are free to develop and innovate with ease in ways that simply weren’t possible in legacy technologies.

Snowflake also supports modern programmability through multiple ways of connecting to the service. This includes ODBC and JDBC drivers; support for Python, Spark, Go, and .NET; and connectivity using Kafka and Spark Streaming to name a few.

Data Replication from SAP

HVR supports log-based data replication from SAP applications when the database hosting SAP is one of HVR’s supported databases including Oracle, SQL Server, DB2, and SAP HANA.

The SAP ECC software suite, first released in 2004 and supported at least through 2027 with extended support till 2030, is still the most commonly deployed version of SAP today. Many ECC deployments feature three different types of tables storing ERP data:

- Transparent tables, with a one-to-one mapping between the way SAP looks at the table, and a database table. Data in a transparent table is readily readable in the database.

- Pool tables, with multiple application tables mapped to a single database table, and data stored in a binary, compressed and encoded format.

- Cluster tables, with one or more application tables mapped to a single database table, and data stored in a binary, compressed and encoded format. A single record from the application perspective may span multiple rows in the database table. Some of SAP’s most important data resides in cluster tables.

HVR’s log-based data replication always uses the database transaction logs to determine application changes, irrespective of the table type. For pool and cluster tables, a separate SAP Transform is used to decompress and decode the data so it can be used in SQL-based systems like Snowflake that have no ability to execute SAP’s proprietary ABAP code. Note HVR’s SAP Transform does not use ABAP and requires no access to SAP’s application servers. Rather, the transform runs downstream in the replication flow, away from the SAP application.

HVR groups tables that are replicated as a transactionally consistent set in a channel. Generally, HVR imports table definitions from the database’s data dictionary to add tables to the channel. For an SAP ERP source, HVR integrates with SAP’s dictionary to support the up-to-date definition of pool and cluster tables, including any custom Z-columns that may have been added (for an example see figure below).

To facilitate downstream processing of the data, you can add two extra columns to all tables on the target:

- is_deleted, to mark rows that are deleted from the source as deleted rather than physically deleting them (soft delete)

- r_datetime to record the timestamp when the row was applied to the target

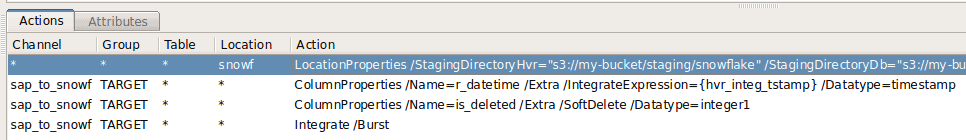

HVR uses actions to define these extra columns as shown below.

Once the definition of the channel is complete, use HVR to:

- Initialize the replication and start capturing changes.

- Refresh the target for the initial load. Note HVR can create target tables for you, mapping source data types to compatible, loss-less data types on the target. The initial load and CDC are aligned to ensure no changes are lost and also no duplicates are applied.

- Compare the data as needed.

With data replicating into Snowflake, we are now ready to model the data in Snowflake which is Part 2 of the blog. Learn more about getting started with Real-time Data Streaming to Snowflake with HVR.

Mark is the CTO of real-time data replication solution provider, HVR. Mark has a strong background in data replication as well as real-time Business Intelligence and analytics. Throughout his 15+ year career, Mark has expanded his responsibilities from consultant to product management and business development.