This year’s Snowflake Summit featured a slew of exciting announcements that furthered Snowflake’s vision of connecting the world’s data. To help organizations maximize the value of their data, including unstructured and third-party data, Snowflake continues to advance data programmability, giving users more flexibility and greater extensibility across programming languages and programming models as they use Snowflake’s Data Cloud for machine learning (ML).

Our ecosystem of data science partners has taken the lead in leveraging these advancements to enhance users’ experience across many steps of the data science workflow, including:

- Feature engineering

- ML model inference

- End-to-end ML in Snowflake with SQL

Accelerating Feature Engineering

Among the numerous announcements, Snowpark and Java user-defined functions (UDFs) stood out because they let our data science partners help joint customers benefit even further from the nearly unlimited performance and scale of Snowflake’s elastic performance engine.

Snowpark is designed to make building complex data pipelines a breeze and to empower data engineers, data scientists, and developers who write code as part of their notebook-based programming in Dataiku, H2O.ai, and Zepl (now part of DataRobot’s platform). With Snowpark, they can use their preferred language and familiar programming concepts such as DataFrames to accelerate feature engineering, and then execute these workloads directly within Snowflake. Snowpark is now in public preview for Scala, and future support for Java, Python, and additional languages is planned.
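To illustrate the idea of DataFrame operations that are recorded on the client and then executed where the data lives, here is a toy Java sketch. It is not the Snowpark API (which, per the announcement, is in preview for Scala); the `LazyFrame` class, its methods, and the `ORDERS` table with `AMOUNT` and `STATUS` columns are hypothetical. Each call only records an operation, and `toSql()` renders a single SQL statement that a warehouse could run without moving the data out.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical, simplified DataFrame-like builder (NOT the Snowpark API).
// Calls only record operations; nothing executes until toSql() renders
// one SQL statement that the warehouse engine could run next to the data.
final class LazyFrame {
    private final String table;
    private final List<String> projections; // derived feature columns
    private final List<String> filters;     // row predicates, ANDed together

    private LazyFrame(String table, List<String> projections, List<String> filters) {
        this.table = table;
        this.projections = projections;
        this.filters = filters;
    }

    static LazyFrame table(String name) {
        return new LazyFrame(name, new ArrayList<>(), new ArrayList<>());
    }

    // Records a derived column (a "feature") defined by a SQL expression.
    LazyFrame withColumn(String alias, String sqlExpr) {
        List<String> p = new ArrayList<>(projections);
        p.add(sqlExpr + " AS " + alias);
        return new LazyFrame(table, p, new ArrayList<>(filters));
    }

    // Records a row filter; it becomes part of the WHERE clause.
    LazyFrame filter(String predicate) {
        List<String> f = new ArrayList<>(filters);
        f.add(predicate);
        return new LazyFrame(table, new ArrayList<>(projections), f);
    }

    // Renders all recorded operations as a single query string.
    String toSql() {
        String select = projections.isEmpty() ? "*" : "*, " + String.join(", ", projections);
        StringBuilder sql = new StringBuilder("SELECT " + select + " FROM " + table);
        if (!filters.isEmpty()) {
            sql.append(" WHERE ").append(String.join(" AND ", filters));
        }
        return sql.toString();
    }
}
```

For example, `LazyFrame.table("ORDERS").withColumn("AMOUNT_LOG", "LN(1 + AMOUNT)").filter("STATUS = 'SHIPPED'").toSql()` yields one query that computes the feature inside the engine. Building the plan lazily is what lets a client library send a single query to the engine instead of pulling rows back into the notebook.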

No-code and low-code users building data preparation pipelines in Dataiku also benefit from Snowflake’s engine through Java UDFs. Java UDFs, now in public preview, allow workloads expressed in Java to run inside Snowflake in a JVM (Java Virtual Machine). Because Dataiku’s core engine is written in Java, Dataiku can conveniently repackage its data preparation functions and push them down so they execute inside Snowflake, effortlessly scaling to any number of users, jobs, or volume of data while also simplifying the architecture.
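A Java UDF handler is an ordinary Java method that Snowflake calls for each row. The sketch below shows the kind of data preparation function a tool could repackage this way. The `CleanEmail` class and the `clean_email` function name are hypothetical, and the `CREATE FUNCTION` statement in the comment is only an outline of Snowflake’s Java UDF DDL, not a tested script.

```java
// Hypothetical data-preparation handler of the kind that could be pushed
// down as a Java UDF. Registration would look roughly like (outline only):
//   CREATE OR REPLACE FUNCTION clean_email(s VARCHAR)
//     RETURNS VARCHAR
//     LANGUAGE JAVA
//     HANDLER = 'CleanEmail.clean'
//     AS '<source of this class>';
class CleanEmail {
    // Called once per row. Trims and lowercases the address; returns null
    // for SQL NULL input or for values without exactly one '@' separating
    // a non-empty local part from a non-empty domain.
    public static String clean(String raw) {
        if (raw == null) {
            return null;
        }
        String s = raw.trim().toLowerCase(java.util.Locale.ROOT);
        int at = s.indexOf('@');
        if (at <= 0 || at != s.lastIndexOf('@') || at == s.length() - 1) {
            return null;
        }
        return s;
    }
}
```

Once registered, the function would be called from SQL like any built-in, for example `SELECT clean_email(email) FROM customers`, so the cleanup runs inside Snowflake rather than in the client tool.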

One last announcement relevant to accelerating feature engineering is the general availability of Snowflake as a data source in Amazon SageMaker Data Wrangler. With this new integration, joint customers can connect to and query data in Snowflake directly from Data Wrangler, then accelerate data preparation and feature engineering with over 300 built-in data transformations that help normalize, transform, and combine features without writing any code.

Simplifying the Path to Production with Model Inference Inside Snowflake

One area where organizations continue to struggle in their ML workflow is securely and reliably bringing models from prototype to production. To execute model inference at scale, operations teams typically need to move production data from a governed source of truth to a separate environment where the model is deployed. Moving large volumes of data has both cost and security implications that often prevent models from reaching production.

To avoid the complexity of moving data out of where it lives, ML development and operations teams can shift to an approach where the model comes to the data. This is now possible with the public preview of Java UDFs, which can use trained models expressed in Java to run inference inside Snowflake.
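As a minimal sketch of the model coming to the data, assume a logistic-regression model trained elsewhere whose coefficients are baked into a Java class. The `ChurnScorer` class, its three features, and its coefficients are illustrative assumptions, not output from any partner tool. A model exported as a JAR would presumably be uploaded to a stage and referenced from the function’s `IMPORTS` clause rather than inlined as shown in the comment.

```java
// Hypothetical scoring handler for in-database batch inference.
// Registration outline (not a tested script):
//   CREATE OR REPLACE FUNCTION churn_score(tickets FLOAT, tenure FLOAT, late FLOAT)
//     RETURNS FLOAT
//     LANGUAGE JAVA
//     HANDLER = 'ChurnScorer.score'
//     AS '<source of this class>';
//   SELECT customer_id, churn_score(tickets, tenure, late) FROM customers;
class ChurnScorer {
    // Illustrative, made-up coefficients; a real model would come from training.
    private static final double INTERCEPT = -1.5;
    private static final double[] WEIGHTS = {0.8, -0.05, 1.2};

    // Called once per row with that row's feature values (SQL FLOAT -> double).
    // Returns the predicted probability via the logistic (sigmoid) function.
    public static double score(double supportTickets, double tenureMonths, double lateInvoices) {
        double[] x = {supportTickets, tenureMonths, lateInvoices};
        double z = INTERCEPT;
        for (int i = 0; i < WEIGHTS.length; i++) {
            z += WEIGHTS[i] * x[i];
        }
        return 1.0 / (1.0 + Math.exp(-z));
    }
}
```

Because scoring is a pure function of each row, the engine is free to evaluate it across many rows in parallel, and the production data never leaves the governed environment.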

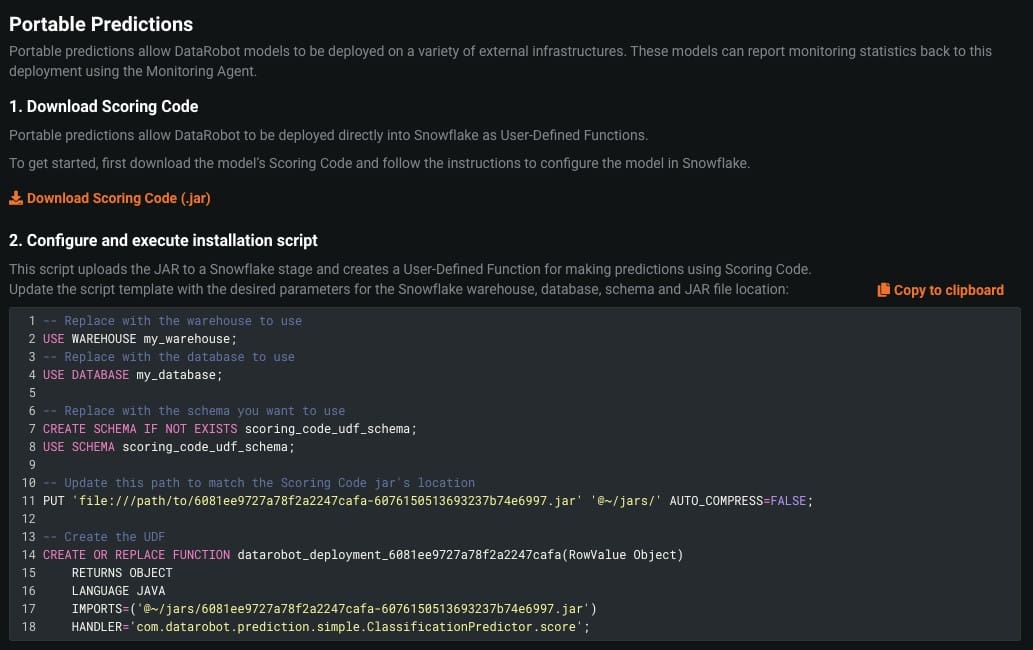

Snowflake data science partners DataRobot and H2O.ai can already export models as JAR files and deploy them inside Snowflake for scalable, batch-oriented model inference. Dataiku announced that it is also working on this capability so that Dataiku models can be deployed as Java UDFs in Snowflake.

But running a model inside Snowflake does not mean that joint customers lose the ability to use their ML platform of choice for model monitoring. For example, with DataRobot, customers can ingest service and prediction data back into DataRobot MLOps for analysis of model drift over time.

Building and Managing ML Models Natively Inside Snowflake with SQL

Building ML models can get complicated because of the tools and depth of technical knowledge required to build, train, and deploy the right prediction models. Teams need to know which model to use and how to tune it, possibly in a programming language that is unfamiliar to many of the analysts and business experts who have extensive domain knowledge.

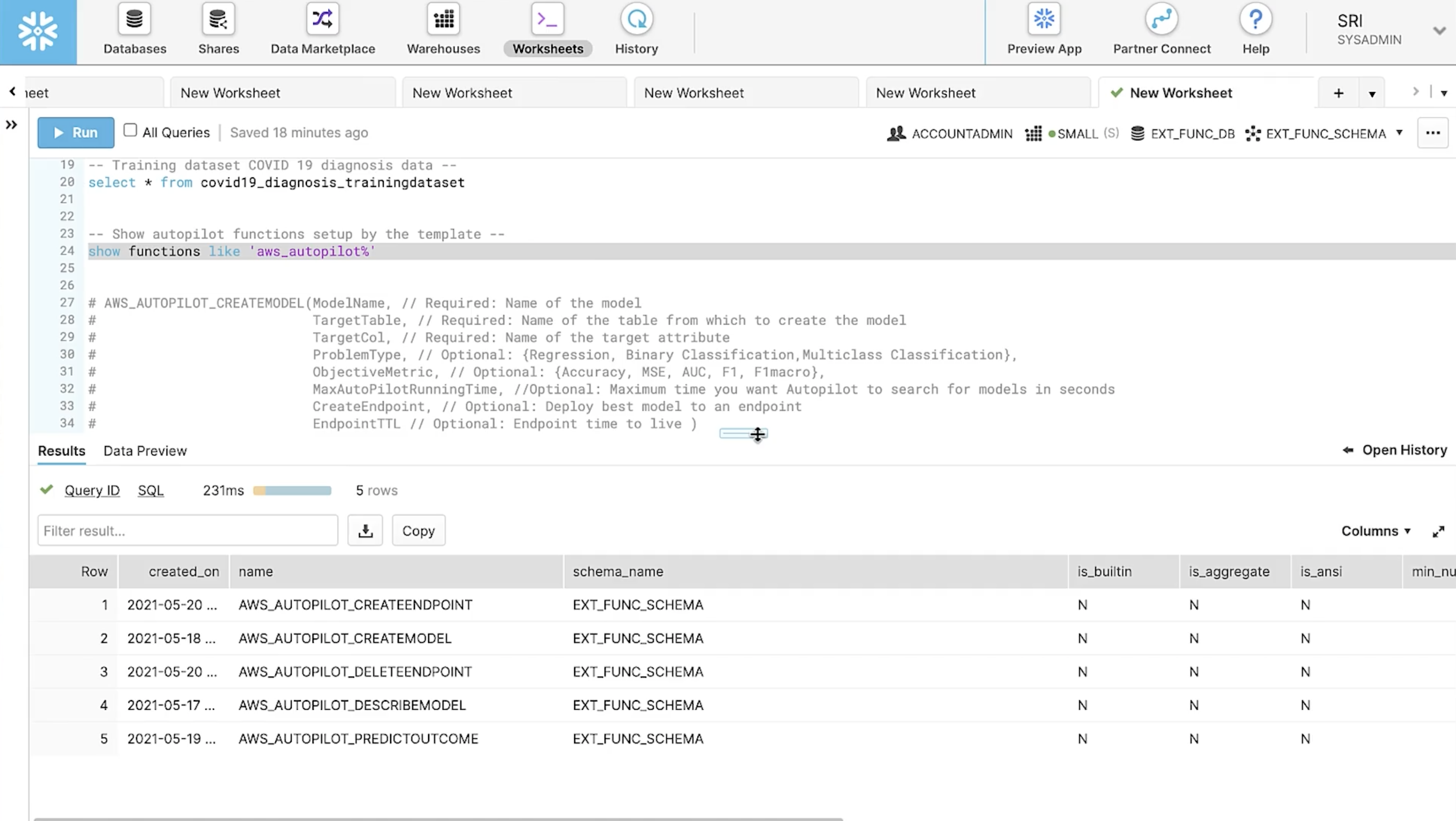

To democratize ML, joint Snowflake and AWS customers can soon bring the power of SageMaker Autopilot to automatically build and deploy the best ML models natively from inside Snowflake using SQL. Once data is prepared and made available in tabular format, SageMaker Autopilot automatically builds, trains, and tunes ML models based on the data, while still allowing customers to maintain full control and visibility.

As part of the upcoming private preview of Snowflake with Amazon SageMaker Autopilot, the native functionality available inside Snowflake also includes the ability to manage models and request new predictions from already deployed models using SQL.

What’s Next?

Learn more about the Snowpark and Java UDF announcements from our partners in the Snowpark Accelerated program including:

Get hands-on for these announcements:

- Visit Snowflake guides to get started with Snowpark with step-by-step instructions

- Go to Partner Connect inside Snowflake to get started with DataRobot, Zepl (part of DataRobot’s platform), Dataiku, or H2O.ai

- Register for the upcoming demo of Snowflake with Amazon SageMaker Data Wrangler

- Sign up to get a spot in the private preview of Snowflake with Amazon SageMaker Autopilot and shape the future of ML with SQL

Forward-Looking Statements

This post contains express and implied forward-looking statements, including statements regarding (i) our business strategy, (ii) our products, services, and technology offerings, including those that are under development, (iii) market growth, trends, and competitive considerations, and (iv) the integration, interoperability, and availability of our products with and on third-party platforms. These forward-looking statements are subject to a number of risks, uncertainties and assumptions, including those described under the heading “Risk Factors” and elsewhere in the Quarterly Report on Form 10-Q for the fiscal quarter ended April 30, 2021 that Snowflake has filed with the Securities and Exchange Commission. In light of these risks, uncertainties, and assumptions, actual results could differ materially and adversely from those anticipated or implied in the forward-looking statements. As a result, you should not rely on any forward-looking statements as predictions of future events.

© 2021 Snowflake Inc. All rights reserved. Snowflake, the Snowflake logo, and all other Snowflake product, feature and service names mentioned herein are registered trademarks or trademarks of Snowflake Inc. in the United States and other countries. All other brand names or logos mentioned or used herein are for identification purposes only and may be the trademarks of their respective holder(s). Snowflake may not be associated with, or be sponsored or endorsed by, any such holder(s).