We all know we’re living in challenging times: the economy, global politics, the environment. Not to make light of anything happening today, but this isn’t the first time businesses have faced difficult times. The financial crises of the aughts are still relatively recent history. These crises caused a contraction in the economy and a slowdown in many technology investments. But one area that has consistently remained resilient is investment in data and analytics technologies.

Why? Because business success in times of crisis, today included, requires unprecedented levels of customer service and operational agility. To meet these challenges, companies must have a deeper understanding of their customers and their business, from both inside and out. And that requires better insights from more data and from more diverse data.

Decision-makers know that their own internal data is not enough. In today’s dynamic environment, they want to get any insights they can, from any source, to create differentiation and competitive advantage. The phaseout of third-party cookies has further accelerated the growth in demand for new data sources. Bottom line: Data ecosystems are growing.

According to a recent survey by data science company Explorium, 44% of firms acquire external data from five or more providers. That’s up from only 9% the previous year. Budgets for external data are significant and growing. In the same study, 22% of respondents said they were spending over $500K on external data, with 13% saying they spent over $1M (up from 7% in a similar survey in 2021).

As demand for external data spreads across organizations—from IT to marketing to sales to operations—can data teams find the data their business peers need? Can they keep up with requests? As these data ecosystems grow, now is the time to get data sourcing right.

Hunt for new insights

The request for external data might not come wrapped up with a bow or with exact specifications. In fact, it might not be expressed in terms of data at all. It’s often more of a wistful “I wish I knew…” type of statement. In other words, you might not know what you are looking for, but you likely know what you want to do with it. The first challenge is to capture as much detail as possible. Will the data be used to inform a descriptive customer segmentation, to enrich a predictive model, or to correlate outcomes for a prescriptive model? What level of granularity or frequency is needed? The next challenge is to scour the data landscape to find unique new sources.

Start by breaking down internal data silos

One of the advantages of the data mesh paradigm is thinking about data as a product. When companies shift their mindset from storing data to using data, the opportunities for data sharing explode. Customer 360 initiatives bring data together from across divisions like sales, marketing, customer service, and finance. A product 360 can help identify defects, predict maintenance needs or returns, and streamline operations. The general rule of thumb has been that about 70% of data lies unused within the enterprise—much of it unstructured in emails, chats, reports, or customer service transcripts. As data product thinking collides with new tools such as machine learning and cognitive search, these data sources will become increasingly accessible.

Move on to data sharing outside company walls

Data sharing across a partner ecosystem just makes sense. Kraft Heinz shares data with retailers in “joint value planning”—a win-win collaboration to provide consumers with the right products at the right time, in the right place, and at the right price, making sure to avoid “out of stocks.” Advertising is another huge data sharing ecosystem. Media outlets share data—often through secure data clean rooms—with advertisers who want to tailor messages to specific target audiences. Being able to share data securely, without revealing personal information, opens the door to innumerable opportunities for collaboration across broader ecosystems.

Leverage data marketplaces

Established marketplaces facilitate discovery of different data sources, such as industry benchmarks, local weather patterns, economic indicators, health trends, environmental statistics, and more. For example, through Snowflake Marketplace, potential buyers can browse data products by industry and use case, and even take the data for a test drive. The try-before-you-buy function allows buyers to test whether a new data set complements existing sources or delivers lift in an analytic model. That due diligence offers peace of mind.

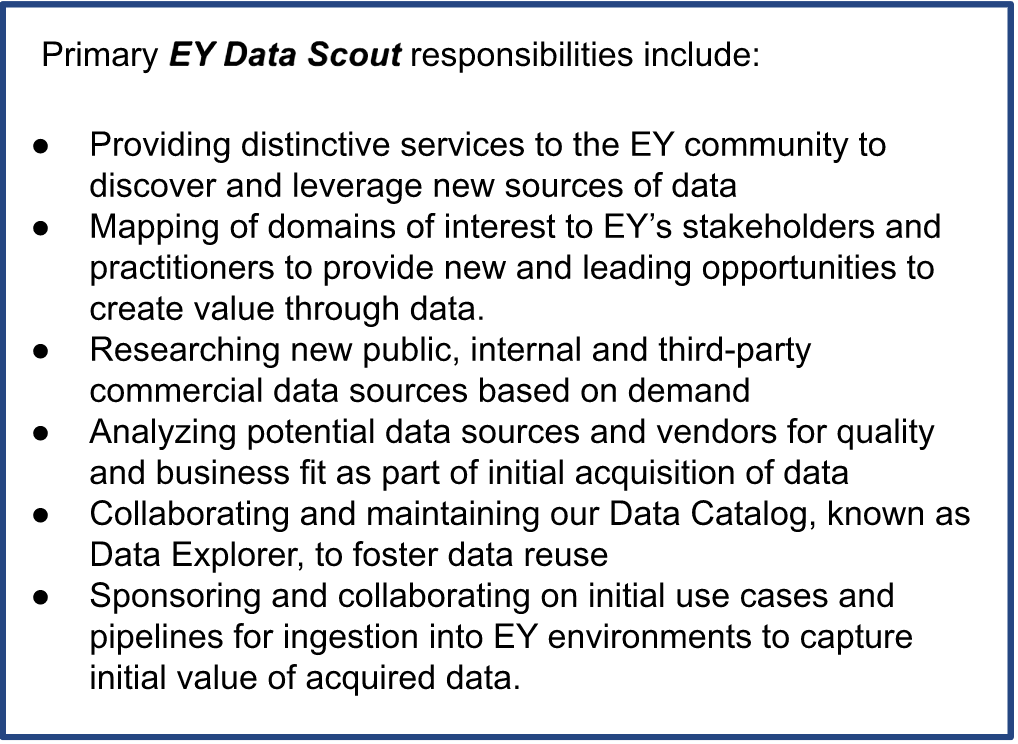
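
A test drive of this kind can be reduced to two simple checks. The sketch below is a hypothetical illustration, not a Snowflake Marketplace feature or API: `match_rate` measures how many internal records a trial data set could enrich, and `relative_lift` compares a higher-is-better model metric before and after the trial data is added. The function names, thresholds, and sample numbers are all assumptions made for the example.

```python
def match_rate(internal_keys, external_keys):
    """Share of internal records that also appear in the trial data set."""
    internal = set(internal_keys)
    if not internal:
        return 0.0
    return len(internal & set(external_keys)) / len(internal)


def relative_lift(baseline_score, enriched_score):
    """Relative improvement of a higher-is-better metric such as AUC."""
    if baseline_score <= 0:
        raise ValueError("baseline_score must be positive")
    return (enriched_score - baseline_score) / baseline_score


def passes_trial(coverage, lift, min_coverage=0.5, min_lift=0.02):
    """Hypothetical acceptance rule; the thresholds are illustrative only."""
    return coverage >= min_coverage and lift >= min_lift


# Made-up trial: customer IDs we hold vs. IDs covered by the trial data set.
coverage = match_rate(["c1", "c2", "c3", "c4"], ["c2", "c3", "c9"])
# Made-up holdout scores for the same model without and with the trial data.
lift = relative_lift(baseline_score=0.80, enriched_score=0.84)
print(coverage, round(lift, 3), passes_trial(coverage, lift))
```

In practice the two scores would come from evaluating the same model on the same holdout set, so that any difference can be attributed to the new data rather than to a change in the evaluation.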

Appoint specialists—a new data hunter or scout—to identify and vet sources

These data sourcing specialists are a hot new data job—or should be for companies serious about expanding data sources more effectively. A quick search of Indeed.com uncovered a Data Scout opening at EY to “explore and evaluate new public, commercial, and internal data sources to enable the Data Office to continue to uncover and support use of desirable data requested by the Firm. New sources are key to many of EY’s service areas and can be used to continually confirm and improve data-driven insights and services offered in support of EY clients.” While not all companies will hire a dedicated “scout,” some will assign the responsibility within the data team or to a council of stakeholders.

Adopt best practices for data acquisition

As data acquisition expands across the organization, formal processes can prevent overspending and ensure proper use. Basic data governance provides a set of guidelines: know your data and protect your data in order to unlock the full potential of your data. Governance applies to external data as well as what you produce internally.

- Start with an inventory of external data sources: The first order of business is to know what external data exists within your enterprise. Where data acquisition policies have been liberal or nonexistent, external data is usually lurking in corners around the company. Where data has traditionally been siloed, data leaders might not even know what they’ve got, or how many copies of the same data they have bought. It is not unheard of for different divisions, for example sales, marketing, and finance, to each purchase a copy of the same data. In that case, the inventory exercise can be followed by a contract rationalization effort to eliminate duplicate purchases and reduce spend.

- Coordinate organization-wide data acquisition: Going forward, ensure that data acquisition is coordinated across all teams. Establish a cross-functional steering committee of stakeholders to coordinate data requirements and acquisitions. These stakeholders could be producers of a data product or the end consumers of the data. Broad representation ensures that all needs are well captured.

- Track access, use, and outcomes: Monitor everything. How is the data being used? By whom? For what purpose? And to what outcome? Capture not only successful use cases but also what doesn’t work. We often hear how important it is to embrace failure, but to avoid repeated failures, it’s important to also capture lessons learned. Data users must capture not only where a data set provides lift to a model but also where it does not: which data sets offer the strongest “signals” and which were tested and failed. Tracking access, usage, and outcomes in a data catalog ensures that this information is available across the company. Data access via Snowflake Marketplace also allows for access and usage monitoring. Correlating use and outcomes enables attribution and ROI calculation.
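
To illustrate how the inventory and tracking steps above could feed a rationalization effort and an ROI calculation, here is a minimal sketch. Everything in it is an assumption for the sake of the example: the `Subscription` record, the provider and data set names, the rule that one contract per data set is enough, and the idea that each use of a data set has been assigned an attributed value. A real data catalog would supply these records.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Subscription:
    """One external data contract found during the inventory (hypothetical schema)."""
    provider: str
    dataset: str
    division: str
    annual_cost: float


def find_duplicates(subscriptions):
    """Return {(provider, dataset): [subscriptions]} for data bought more than once."""
    groups = defaultdict(list)
    for sub in subscriptions:
        # Normalize names so "Weather Daily" and "weather daily" match.
        key = (sub.provider.strip().lower(), sub.dataset.strip().lower())
        groups[key].append(sub)
    return {key: subs for key, subs in groups.items() if len(subs) > 1}


def potential_savings(duplicates):
    """Assumes one contract per data set is enough and the costliest one is kept."""
    return sum(
        sum(s.annual_cost for s in subs) - max(s.annual_cost for s in subs)
        for subs in duplicates.values()
    )


def roi_by_dataset(costs, outcomes):
    """costs: {dataset: cost}; outcomes: iterable of (dataset, attributed value)."""
    value = defaultdict(float)
    for dataset, attributed_value in outcomes:
        value[dataset] += attributed_value
    return {d: (value[d] - cost) / cost for d, cost in costs.items() if cost > 0}


# Made-up inventory: two divisions bought the same weather feed.
inventory = [
    Subscription("AcmeData", "Weather Daily", "sales", 40_000),
    Subscription("acmedata", "weather daily", "marketing", 50_000),
    Subscription("AcmeData", "Econ Indicators", "finance", 30_000),
]
duplicates = find_duplicates(inventory)
print(potential_savings(duplicates))

# Made-up outcome log: value attributed to each data set by its users.
roi = roi_by_dataset(
    {"weather daily": 50_000, "econ indicators": 30_000},
    [("weather daily", 60_000), ("weather daily", 15_000), ("econ indicators", 15_000)],
)
print(roi)
```

A negative ROI in this sketch does not by itself mean a data set should be dropped. It flags a data set whose recorded outcomes do not yet cover its cost, which is exactly the kind of lesson learned that the steering committee would want to review.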

The question is no longer whether you will leverage external data for insights into your customers, your operations, and your business context. The mandate is that you do it cost-effectively.

Stay tuned for more guidance from Snowflake on best practices in external data acquisition, and join us at Snowflake Summit 2023 in Las Vegas. We’re working on a “data dating” session to bring data providers and potential data buyers together. In the meantime, if you’d like to hear more or share your own stories, don’t hesitate to reach out.