Diverse data delivers richer experiences for customers. Layers of data, of all sizes and kinds, provide insights into customers’ profiles and preferences and how best to serve them. Traditionally, bringing all that data together has been challenging because it required copying and moving data across systems. That took effort and raised concerns about the ability to govern the data.

In May, Snowflake hosted a webinar on data collaboration and clean rooms. Snowflake’s data sharing capability provides access to live, ready-to-query data, without having to copy and move it, making it easier to access and to govern. But some data is extremely sensitive or highly regulated.

Snowflake’s enhanced Global Data Clean Room capability, based on features now available in private preview, enables the privacy of data to be maintained while allowing multiple parties to derive insights from it. Data owners define specific rules about which operations can be performed using their data, who can run them, and what the responses can be. Clean room configurations can also include additional privacy enhancing capabilities such as time stamping or the injection of “noise” in the results. Based on these configurations, parties to the clean room can then run their allowed operations against the data without seeing the underlying data or logic. For example, a customer overlap query could report how many common customers two or more parties have, rather than showing who those customers actually are. I call this “sharing without showing.”
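To make “sharing without showing” concrete, here is a minimal Python sketch of an overlap query that returns only a count. It is an illustration of the idea, not how Snowflake implements clean rooms: the function names, the sample email addresses, and the choice to hash identifiers with SHA-256 are all assumptions made for this example.

```python
import hashlib


def hash_id(email: str) -> str:
    """Normalize and hash an identifier so raw values are never compared directly."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def overlap_count(party_a: list[str], party_b: list[str]) -> int:
    """Return only how many customers both parties share, never which ones."""
    hashed_a = {hash_id(e) for e in party_a}
    hashed_b = {hash_id(e) for e in party_b}
    return len(hashed_a & hashed_b)


# Hypothetical customer lists held by two different parties.
advertiser = ["ann@example.com", "bob@example.com", "cy@example.com"]
publisher = ["BOB@example.com ", "cy@example.com", "dee@example.com"]

print(overlap_count(advertiser, publisher))  # 2
```

The caller learns that two customers are shared, but the function never returns the matching identifiers themselves.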

Not surprisingly, the audience asked some pertinent questions about how these capabilities work. We’ve asked the experts to provide some answers:

In your experience, do you foresee changes in the significance of data collaboration? What’s next in terms of advancements in data collaboration?

Companies have already been sharing data, just much less efficiently. But current industry dynamics have accelerated demand for sharing and collaboration. In the advertising world, the deprecation of third-party cookies has left many marketers without sources of external data. At the same time, new use cases and data-intensive analytic methods have resulted in an explosion in demand for data. Yet concerns about preserving data privacy have also grown. These dynamics have resulted in a perfect storm: the need for secure data collaboration via data clean rooms.

The evolution of Snowflake Data Cloud illustrates the growing importance of data sharing today. In the illustration below, each dot represents a customer sharing data, and over time sharing has become more prevalent and the connections more dense.

What is a data clean room? Is it a concept, an architecture, or a solution using multiple Snowflake features (such as a row access policy)?

This is another great question. A clean room is not necessarily a “room” at all. Some traditional clean rooms require physical infrastructure; others rely on a trusted third party. But modern data clean rooms are not physical spaces, and don’t require moving data into a different system or environment. In fact, that is one of the advantages of Snowflake. Your data doesn’t need to be moved; it can remain secure and governed within your Snowflake environment.

Snowflake Data Clean Room is a framework, or design pattern, for secure, multi-party collaboration, leveraging core Snowflake features including:

- Row Access Policies and database roles where parties can match customer data without exposing either party’s PII

- Stored Procedures to generate and validate query requests

- Secure Data Sharing for automatically and securely sharing tables between multiple Snowflake accounts without the need for movement outside of Snowflake

Data clean rooms are now built using Snowflake’s Native Application Framework, currently in private preview, which allows you to build an application using Snowflake primitives like stored procedures and data shares, publish it on Snowflake Marketplace, and run it within a customer’s Snowflake instance.

With the introduction of Python UDFs, now in public preview, Snowflake users can leverage Jinja templates, which define the structure of a query with substitutable parameters such as group-by attributes, joins, and where clauses. This is what we refer to as Defined Access.
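The idea of a query whose structure is fixed while only approved parameters vary can be sketched in a few lines of Python. This sketch uses the standard library’s `string.Template` as a stand-in for Jinja so that it runs without extra packages. The template text, the table and column names, and the list of allowed dimensions are all hypothetical.

```python
from string import Template

# Hypothetical query template: the join and aggregate are fixed by the provider;
# only the group-by dimensions can be substituted.
OVERLAP_TEMPLATE = Template(
    "SELECT $dimensions, COUNT(DISTINCT p.customer_id) AS overlap "
    "FROM provider_customers p JOIN consumer_customers c "
    "ON p.email_hash = c.email_hash "
    "GROUP BY $dimensions"
)

# Hypothetical set of columns the provider has agreed to expose as dimensions.
ALLOWED_DIMENSIONS = {"status", "pets", "age_band"}


def render_query(dimensions: list[str]) -> str:
    """Fill the template, rejecting any dimension the provider has not approved."""
    if not dimensions:
        raise ValueError("at least one dimension is required")
    rejected = [d for d in dimensions if d not in ALLOWED_DIMENSIONS]
    if rejected:
        raise ValueError(f"dimensions not allowed: {rejected}")
    return OVERLAP_TEMPLATE.substitute(dimensions=", ".join(dimensions))


print(render_query(["status", "pets"]))
```

Because every parameter is checked against an allowlist before substitution, a request for a column such as `email` fails instead of reaching the data.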

The Snowflake Product Team is working on query constraints that will enable ad hoc use cases while allowing your data to remain protected.

Snowflake continues to invest in capabilities that extend clean room functionality.

Can you use Snowflake Data Clean Rooms across different clouds (AWS, GCP, and MSFT)? For example, when one company is running Snowflake on AWS, another one on Azure, does the data move into the clean room?

Let’s answer the second question first. The short answer is “no.” The data doesn’t move into a clean room. Snowflake data sharing enables direct data access within the Snowflake environment. And, the clean room is a capability that provides additional protection where the data resides in Snowflake, not a separate “room” per se.

The answer to the first question is a little more nuanced. Snowflake does support multiple clouds and regions. However, data can be shared directly only between accounts deployed in the same cloud and region, so sharing across clouds or regions requires replication. As a result, to use Snowflake Data Clean Room capabilities, the data must first be replicated to the provider’s account in the desired cloud regions. That said, the new auto-fulfillment feature, currently in private preview, simplifies extending data sharing to users in other clouds or regions. When enabled, auto-fulfillment ensures that the data is automatically replicated to, and stays current in, the regions you specify.

When using multiple data sources from different providers, would all the purchased data need to be in Snowflake?

Yes and no. Again, the answer is nuanced. All parties need a Snowflake instance. Parties who are not yet Snowflake customers could easily sign up for an account via a simple link. Or the data provider, or host of the clean room, could create a Reader Account for those parties who are not yet ready for their own Snowflake account. Everyone needs a Snowflake account, although they do not necessarily need to be a direct Snowflake customer.

Another option for Snowflake customers who have external data they’d like to share but not upload into Snowflake is to put the data in blob storage, such as AWS S3, and access it via a Snowflake External Table.

Another variant of the question above was asked: Does a customer need to have Snowflake to write data into a shared clean room?

Yes. See above.

How do data collaborators ensure that the rules around first-party data use and governance are also shared, especially around consent? Where does data governance come into the picture?

Snowflake provides the framework for secure sharing and offers a number of data governance capabilities, including partnerships with data governance solutions such as Collibra or Alation.

Within the Snowflake Data Clean Room framework, providers can add further safeguards: noise in the results; timestamp constraints, which reduce the likelihood of jitter attacks or triangulation of small changes in aggregates; and redaction of rows with small distinct customer counts, which reduces the chance of reidentification via “thin slicing” (finding identifiable patterns in a small sample).
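Two of these safeguards, small-count redaction and noise, can be sketched as a post-processing step on aggregate results. This is a simplified illustration rather than Snowflake’s implementation: the function name, the threshold of 25, and the uniform integer noise are assumptions chosen for the example, and real deployments would tune or replace them.

```python
import random


def protect_aggregates(counts, min_count=25, noise_scale=3, rng=None):
    """Drop thin slices and add bounded random noise to the remaining counts.

    counts      -- mapping of group label to distinct customer count
    min_count   -- hypothetical threshold below which a group is redacted
    noise_scale -- hypothetical maximum absolute noise added to each count
    """
    if rng is None:
        rng = random.Random()
    protected = {}
    for group, count in counts.items():
        if count < min_count:
            continue  # redact groups small enough to risk reidentification
        noisy = count + rng.randint(-noise_scale, noise_scale)
        protected[group] = max(noisy, 0)
    return protected


# Hypothetical overlap counts grouped by a "pets" attribute.
raw = {"DOG": 1200, "CAT": 800, "FERRET": 4}
print(protect_aggregates(raw))
```

The `FERRET` group is removed entirely, and the counts for the larger groups are reported only to within a few units of their true values.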

Ultimately, however, the parties sharing data are responsible for applying the appropriate security and privacy rules, such as removing customers that haven’t consented to the use of their data. Any established governance rules can be replicated across Snowflake instances.

I have a proprietary data application that several groups would like to use. They are asking for an API. Can this replace the development of an API?

Yes, the Snowflake Data Clean Room capability would allow customers to provide live query access to the data or data application within Snowflake rather than having to build and manage an API. This solution is an easier and more scalable way to share data and data applications.

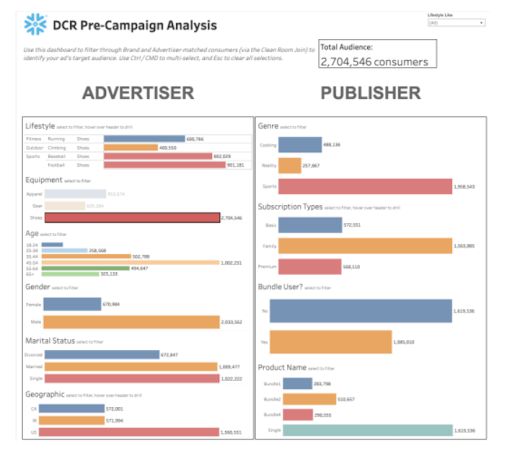

Were the charts and graphs shown in the webinar built within the Snowflake UI or an external tool?

The charts and graphs shown in the webinar were built with Tableau as part of a demo of Snowflake Data Clean Room capabilities. However, there are three options for building these types of user interfaces.

Regarding the allowed statements, do they have to be perfectly identical (even the punctuations, blank spaces in the statement) or not?

The short answer is “no” but only because the statements are not being written each time by the parties to the clean room.

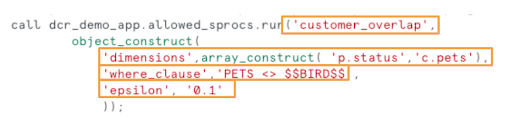

A Snowflake Data Clean Room allows users to execute specific queries against the data. These queries are referred to as “allowed statements.” However, these “allowed statements” are not written and manually entered as SQL statements. They are generated from a query template, created using Jinja, with parameters such as group by and where clauses. Specific parameters are agreed to by the parties and they are executed in a procedure call.

Upon request, Snowflake can share sample Jinja templates for several advertising use cases:

- Audience Overlap and Segment Creation for analyzing aggregate groupings of overlapping customers and building segments for audience planning

- Customer Enrichment to receive enriched data on overlapping customers

- Campaign Measurement to determine conversion rates and return on advertising spend

- Lookalike Segments to grow your target audience by identifying new customers

Here is an example of a procedure call the consumer would make to the provider’s data clean room application.

The analyst selects the customer_overlap template, which finds common customers from the data of the two (or more) parties. The analyst can specify the desired filters, or “group by” commands (in this case “status” and “pets”) and further specifies through a “where clause” that “pets” does not equal BIRD. This particular template includes the injection of noise.
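The request described above can be thought of as a small set of parameters rather than a hand-written SQL statement, which is why spacing and capitalization in the request need not match exactly. The sketch below is hypothetical and does not reproduce the actual procedure call from the webinar: the function name, the field names, and the normalization rules are assumptions made for illustration.

```python
import json


def normalize_request(template: str, dimensions: list[str], where_clause: str) -> str:
    """Reduce a request to a canonical string so equivalent requests compare equal."""
    canonical = {
        "template": template.strip().lower(),
        "dimensions": [d.strip().lower() for d in dimensions],
        # Collapse runs of whitespace; a real implementation would need to
        # leave whitespace inside quoted literals untouched.
        "where_clause": " ".join(where_clause.split()),
    }
    return json.dumps(canonical, sort_keys=True)


# The parameters the parties agreed on for the customer_overlap template.
agreed = normalize_request("customer_overlap", ["status", "pets"], "pets <> 'BIRD'")

# The same request typed with different spacing and capitalization.
request = normalize_request("customer_overlap", [" status", "PETS "], "pets   <>  'BIRD'")

print(request == agreed)  # True
```

A request with a different dimension or filter would produce a different canonical string and would not match the agreed parameters.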

Is the plan to include features that will allow for exporting to various marketing platforms (DSPs, Socials)?

Snowflake does not currently plan to build native connectors to specific marketing platforms, but we do support a number of methods for this type of export:

- Data sharing if the recipient platform is also on Snowflake

- Export to blob storage (e.g., S3) where the data can be picked up by the consumer

- Pushing data to a consumer’s API

- Any classic ETL technique for moving data using your existing tools

Is it up to the advertiser to have a “targetable” ID applied to the record or will Snowflake provide a solution for that?

No, you don’t need to have one, nor do we provide it directly.

Snowflake does not provide ID solutions. We have vendors in the marketplace that advertisers can work with, such as LiveRamp and Experian. But the dependency on a particular ID is up to the advertiser and the provider.

What is the implementation path and ramp up?

There are several options for setting up a Snowflake Data Clean Room. Obviously doing it yourself requires more expertise and effort. Getting help from Snowflake and partners can accelerate the implementation. Options include:

- Do-It-Yourself. Some customers have opted to build their own data clean rooms. Typically they have sufficient internal resources to dedicate. For those planning to go the DIY route, Snowflake recommends the instructor-led Snowflake Data Engineering Training and the Data Clean Room Quickstart. The data sharing, enrichment, and security functionality available depends on the customer’s specific data clean room configuration.

- Professional Services. Another option is to work with Snowflake Professional Services.

- Snowflake Partners. A third option is to work with a partner like Habu. A quick demo illustrates how Habu facilitates the setup of new clean rooms between parties.

Data Clean Rooms at Snowflake Summit

In keeping with the theme of The World of Data Collaboration, Snowflake Global Data Clean Room capabilities played a big role at the recent Snowflake Summit in Las Vegas.

Please check out the keynote “Go Further Together with Data Cloud Collaboration” for a demo on the new capabilities and a customer testimonial from NBCU, and the keynote “Building in the Data Cloud – Machine Learning and Application Development” for more detail on how to create advanced data clean rooms with Snowflake’s native application development capabilities.

Summit Keynote: Go Further Together with Data Cloud Collaboration

Summit Keynote: Building in the Data Cloud – Machine Learning and Application Development